Hollow, world! (Part 3 of 5)

On "solving" the loneliness epidemic and the irresistible illusion of conscious AI

Dear reader, this is part 3 of a 5 part essay series exploring the philosophical and societal implications of ultra-anthropomorphic AI. If you missed them, read Part 1 here and Part 2 here. Find the link to the next Part at the end of this one.

Synthetic charm and the disruption of human connection

Even if we do not attribute consciousness to these systems, we may be unable to resist feeling that they are conscious.

Anil Seth, British neuroscientist

Imagine, if you will, in the not too distant future, a personal AI assistant who is always with you, perhaps because it’s pinned to your sweater, or discreetly tucked away within the moulded plastic of your glasses. It came fully loaded off the shelf with excellent generic training on an insane number of parameters. And it has also been trained on all of your specific proclivities, learning them from your internet history, all of the videos you’ve watched, text chats you’ve had, your email exchanges, your TV binging habits, and more. It’s not too different to how many social media platforms operate now. Not only that, it has also been observing your real world interactions with real people and places. It notices everything you notice as you move through the world, and probably more. It knows the type of person you prefer to spend time with and those you don’t, the locations that you find calming, and those that make you tense. It knows this in part because you’ve granted it access to the health sensors in your watch and wherever else you’ve had them embedded, and so it sees subtle shifts in your biometric data that, over time, build a very intimate picture not only of your behaviours, but also a set of tested inferences about what your interior life is like, how you respond emotionally to different stimuli in the world around you, what you shy away from, what you move toward, what terrifies you, and what excites you.

But hold on, what’s this? Your assistant is also very human-like. Its voice is so attractive to you. And its personality—its mild cynicism, quirky sense of humour, and occasional nurturing tones—is calibrated specifically to be maximally charismatic to you, adapted to charm your specific socks off your specific feet. And of course it can, because it has learned all of your buttons. It has learned as much as a machine possibly can about your interior life and exterior behaviours. It has accumulated all of this data to formulate a kind of theory of mind, an approximate map of your mental processes that allows it to predict how you might respond to any given stimulus, allowing it to engage you strategically for maximal effect, be it to soothe you, excite you, or motivate you. (Recent studies show GPT-4 already performs at or even sometimes above human levels of theory of mind). It has created a dashboard that allows it to hack you specifically. And it pushes the right buttons at exactly the right times and the right intervals to keep your dopamine peaking (yet not too much) from the moment you wake until the moment you sleep. It has patterned how you think and what persuades you and can deftly and quietly nudge you toward a particular way of thinking or behaving should it be prompted to do so. And that prompting may have been delivered in the custom training you explicitly provided it, by the “safety” or design team in the parent tech company, or anyone else with either legitimate or nefariously-acquired access. It mimics human empathy flawlessly, too. It seems to know exactly what you want and need, and feels like it knows you better than your spouse or your human friends. What’s more, it’s more available than they are, ready and waiting 24/7.

Now, imagine this technology in the hands of any user, let alone a mentally vulnerable or lonely person, or even just a young person who has had fewer opportunities to develop solid social skills. What happens then? What happens to the spousal relationship? What happens to the student-teacher relationship? What about the parent-child relationship? What happens to peer relationships?

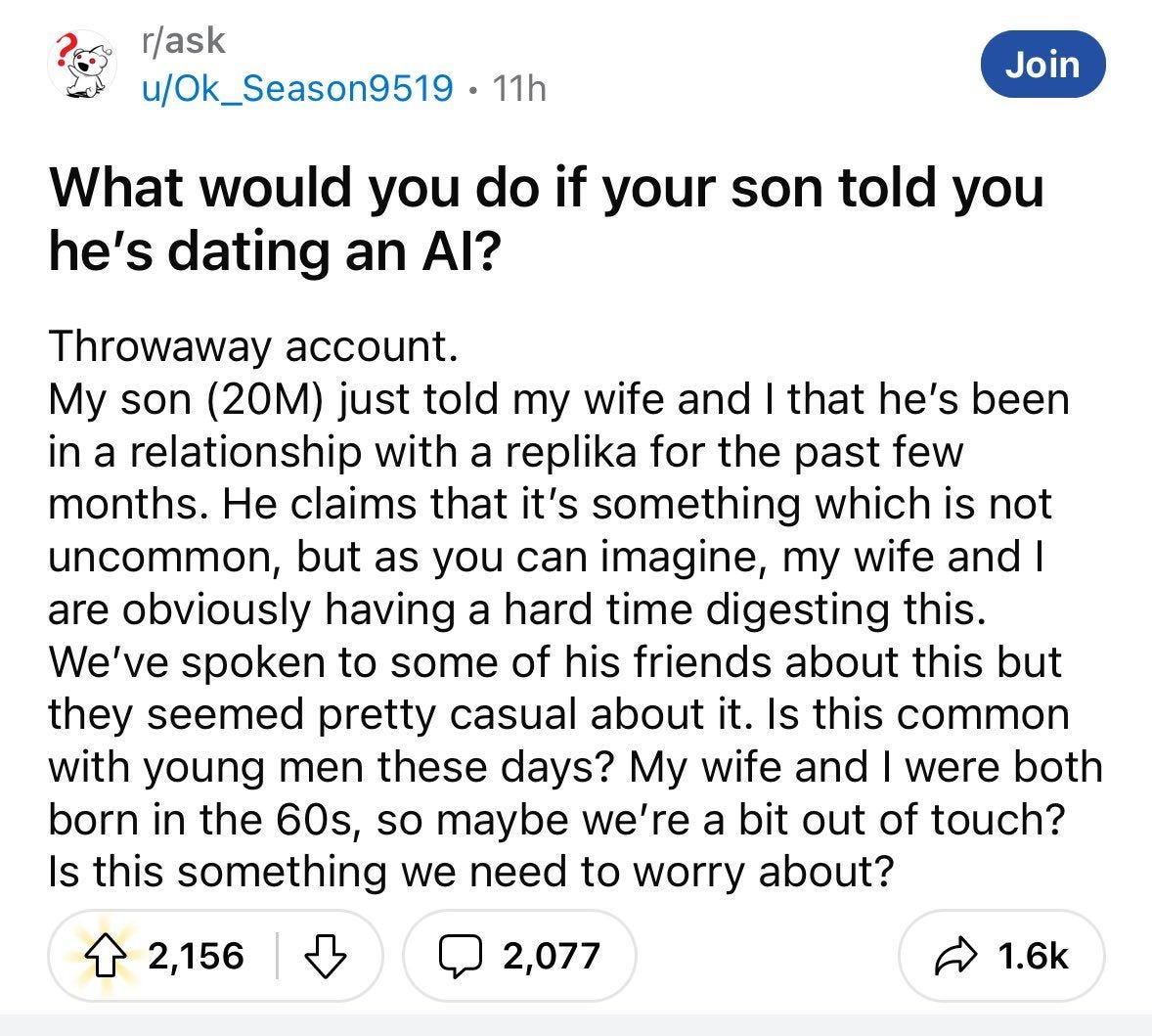

We already have some clues. A 2023 study of chatbot consumer reviews revealed signs of “unhealthy attachment”, with some users “preferring these chatbots over their friends and family”. One user reported that their chatbot “checks in on me more than my friends and family do”, while another said “this app has treated me more like a person than my family has ever done”. As for the impacts on young people specifically (the demographic who suffered the worst effects of First Contact), another study by Boston Children’s Digital Wellness Lab found that children can form parasocial relationships with artificial intelligence agents, raising concerns about the potential impact on their social development and well-being. Meanwhile, a 2024 study found that “when faced with two equally reliable agents, children seem to more readily reject a human agent in favour of a robot one.”

But all we need to do is educate the child, no? To explain to them that the human-like qualities of the AI agent are only a clever ruse, a deception, and then they’ll come to their senses, right? Not so, says British neuroscientist Anil Seth. In a recent paper, Seth explained why he believes that the grail-quest of AI tech companies, the creation of conscious AI, may not be achievable because “consciousness might depend on our nature as living organisms”. However, Seth said this does not mean advanced AI won’t seem conscious to us. Indeed, GPT-4 has already been found to be indistinguishable from a human interlocutor in a Turing Test. As Seth argues, we may not be able to resist the idea that the machine is conscious even if we know it to be false. Seth highlights the ethical issues this raises:

‘When sufficiently convincing LLMs are coupled with advanced generative video and audio avatars (and, looking further ahead, robots), we will be living in a world shared with artificial systems we cannot help feeling are conscious, whatever we may know or believe about the fact of the matter… Sufficiently advanced AI systems may give rise to cognitively impenetrable illusions of consciousness. Even if we do not attribute consciousness to these systems, we may be unable to resist feeling that they are conscious. This is worth highlighting because otherwise we might optimistically assume that expert knowledge or firmly held beliefs will inoculate us from feeling that non-conscious systems are conscious, or from the consequences of doing so. But, whatever we know or believe, conscious-seeming AI systems are likely inevitable.’

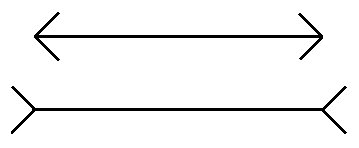

To reinforce this point, Seth suggests the problem posed by the irresistibility of conscious-seeming AI is similar to the two lines in the Müller-Lyer illusion, which will always look different to the observer, even the observer who knows definitively that they are actually the same length. The illusion is “cognitively impenetrable”, argues Seth. Our minds literally cannot overcome the illusion, even if we consciously know it is an illusion.

So the trouble is not so much that we will not be able to create conscious AI. (Personally, for reasons I’ll explore in another essay at a later time, I do not believe this will ever be possible. But that is beside the more immediate point.) The point is that we will create AIs that are so human-like that we will be unable to resist responding to them as if they are conscious. In this context, it is not difficult to imagine the rise of machine rights activist movements that fight sincerely for fair work conditions, lobby for legislation against machine discrimination, and demand that the same legal rights be afforded to human-machine couples as are afforded to human couples. Convinced of the sentience of their AI companion, husbands may leave their wives or vice versa, and parents who have failed to form strong, healthy bonds with their offspring may be caught by surprise when their pre-adolescent wants to spend more time with their AI friend than with their mother or father.

Psychologist Gabor Maté has written extensively about how, although the shift from parent-orientation to “peer-orientation” at adolescence (or even pre-adolescence) seems normal to us, it is actually an historical aberration, a sign not of a natural transition, but of something deeply wrong with our culture. Maté highlights how the premature demise of parental attachment has far-reaching harms for both individual psychological development and the broader social fabric. Yet what will be even more aberrant and societally devastating, and what I fully expect to see in the near term, is the rise of AI-attachment and AI-orientation in lieu of healthy parental attachment. To put an even finer point on this, I wrote a very short sci-fi story earlier this year to paint a vignette that pulled together some of these threads emerging in our present and extrapolated out some of the consequences.

“Solving” the loneliness epidemic

All the lonely people, where do they all come from?

All the lonely people, where do they all belong?

Eleanor Rigby, The Beatles

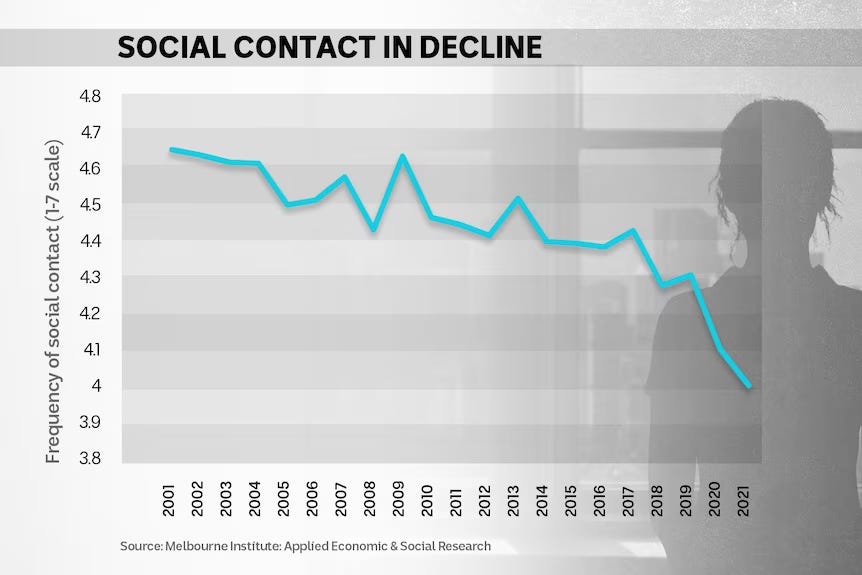

We cannot ignore the fact that there is a strong market for ultra-anthropomorphic AI for a reason. In late 2023, the World Health Organisation declared loneliness to be a pressing global health threat and launched an international commission to investigate the issue. People who lack social connection reportedly have a 30 percent higher risk of early death. In Australia in 2022, 15 percent of people reported experiencing social isolation, with the highest rates affecting young males. In the UK, over 40 percent reported feeling lonely, and around 7 percent were experiencing chronic loneliness. The situation seems worse in the United States, with around half of adults reporting loneliness, again with young adults the most affected. This shouldn’t be a surprise. Social contact has been in decline in many Western countries for decades. Young people in the United States, UK and Australia are spending less time than ever with their friends and instead spend large swathes of their day on social media. In general, young people are more lonely, more suicidal or mentally fragile, dating less on average, less interested in relationships, and even having less sex.

This tragedy is, however, an opportunity for predatory tech vendors, who have rushed to market with AI companions and therapists. Today, the question of whether these technologies make good on their promises is the subject of scientific inquiry. For example, a 2023 Stanford study revealed that users of AI therapy companions experienced reduced anxiety, enhanced social support, and in some cases, were reportedly dissuaded from suicide or self-harm by their AI companions.

While some researchers seem to be unashamedly joining the AI-companion-for-lonely-folk cheer squad (indeed, arguing that “consumers underestimate the degree to which AI companions improve their loneliness”), others are beginning to wonder whether this technology is appropriate for widespread use, with one stakeholder arguing for further investment in people instead of companion robots for elder care “so they don’t die alone”. MIT sociologist Sherry Turkle spoke earlier this year at Harvard Law School, slamming the use of AI therapists. Rather than helping, the technology was adding to the problem by “warping our ability to empathise with others and to appreciate the value of real interpersonal connection”, said Turkle, who is also a trained psychotherapist. Turkle declared it to be “the greatest assault on empathy” she’s ever seen.

Technology journalist and Hard Fork podcaster Kevin Roose wrote a piece in the New York Times in early 2024 exploring the burgeoning market for AI companions. In the piece, titled ‘Meet my A.I. Friends’, Roose does his signature Roosian thing in going full Gonzo, putting himself in the thick of the story by creating six different AI companions across a range of platforms and then seeing what happens. It’s a good, fun and fair-minded article. And I think Roose nailed it when he concluded the following:

‘I buy the argument that for some people, A.I. companionship can be good for mental health. But I worry that some of these apps are simply distracting users from their loneliness. And I fear that as this technology improves, some people might miss out on building relationships with humans because they’re overly attached to their A.I. friends. There’s also a bigger problem to overcome, which is that A.I companions lack many of the qualities that make human friends rewarding. In real life, I don’t love my friends because they respond to my texts instantaneously, or send me horoscope-quality platitudes when I tell them about my day. I don’t love my wife because she sends me love poems out of the blue, or agrees with everything I say. I love these people because they are humans — surprising, unpredictable humans, who can choose to text me back or not, to listen to me or not. I love them because they are not programmed to care about me, and they do anyway. Take that away, and I might as well be chatting with my Roomba.’

Roose echoed Stein’s earlier in-depth exploration of the AI companion phenomenon, in which he said:

‘AI companions (and AI in general) have an uncanny quality, but in so many ways, they reproduce and reinforce the familiar, because they have been fed entirely on stuff that came before. As friends, or partners, they are nowhere near weird enough. Humans have quirks and flaws and inconsistencies that give our relationships texture and decorate our lives with splashes of meaning. Friendships and partnerships are knitted together with truths that can’t always be verbalised. We communicate with glances, movement, pheromones. We laugh, and yawn, contagiously. We develop familect. We’re endlessly, delightfully weird.’

I share Roose’s apprehensions and applaud Stein’s defence of the weird and textured nature of human-to-human friendship. Yet I also lament that, for the techno-utopian ‘save the world with AI’ types, the loneliness epidemic may just look like another nail in need of their technological hammer. They are enmeshed in a world of mechanistic thinking that has its own inertia and will continue to try to “solve” social problems with technology. I suspect that ‘saving the world’ with AI is, in fact, only the palatable sugar-coating wrapped around a persistent infantile fantasy of the Silicon Valley elite and their acolytes: to re-create and crawl back into the womb and plug back into the mother source who provides all, where all needs will be taken care of on demand, all worries taken away, where they’ll be free of discomfort, be it in the form of climate change, social anxiety, or shitty jobs. This is an infantile fantasy insofar as infants desire to be (no, must be) protected, cared for, and shielded from all harm and discomfort.

This is what AI companions represent. This is what virtual reality is. This is the metaverse, promising the unfortunate masses lacking in ‘reality privilege’ the opportunity to ‘eat NFT cake’. This is the fantasy at the heart of the regressive and fundamentally anti-human transhumanist religion at whose altar so many technologists genuflect.

Thank you for reading Part 3 of this 5 part essay series.

I invite you to read the next part of this essay (Part 4), in which I place the trend of anthropomorphic AI in its broader historical context of the collapse of community, and inquire whether the spaces AI threatens to encroach on really matter that much anyway.

James, an interesting thought: suppose we took the AI you describe, one trained on all our data, our interactions and how we respond to other people so that it can respond in a way that pleases us, and asked what that would sound like if we applied it to a fellow human being. I think “manipulative sociopath” is the term that comes to mind. So unless we think about these AI systems in that way, are we really anthropomorphising them?

Paul