Hollow, world! (Part 1 of 5)

On anthropomorphic AI and the irresistible nihilism of perfect simulation

This is Part 1 of a 5-part essay series exploring the philosophical and societal implications of ultra-anthropomorphic AI. Find the link to the next part at the end of this one.

The delicious hollowness of simulation

Friendship is born at that moment when one person says to another, 'What! You too? I thought I was the only one.’

- C.S. Lewis

What is the difference between having a relationship with a perfect simulation of a human, and having a relationship with an actual human?

Let’s run a little thought experiment and find out.

Imagine you had prepared a special meal to share with your spouse. Would it matter to you whether your spouse actually experienced enjoyment when eating the meal? I don’t mean whether the food was to their particular taste or not. I mean, would it matter to you if you found out that their outward expressions of pleasure and enjoyment were all veneer? Suppose they delivered a flawless performance that convinced you without reservation of their delight and gratitude, and then the reality of the situation was revealed to you: your spouse had no actual experience of the meal at all. No positive experience. No negative experience. Just no experience. They made all the right noises and facial expressions, but they felt absolutely nothing. What you observed was only a perfect simulation of what you would expect to see and hear from someone enjoying a meal.

Would that revelation matter to you?

Presumably it would.

Because I assume that you also, like most people, would find the situation to be entirely devoid of meaning. Like most people, you probably share the desire for your life and all the experiences it comprises to actually mean something, and not to merely seem like they mean something. And more, it may be important that these experiences mean something not only to you, but to others also. Even if we’ve never had to explicitly think or say it, we understand the greatest sense of meaning is to be found not in mere sounds of pleasure or words of affirmation, but in the knowledge that those sounds and words are symbols that reliably indicate the existence of an actual embodied experience occurring within another person, perhaps an experience similar—we hope—to one we have had the pleasure of knowing before.

We know what it feels like to take genuine pleasure in a delicious meal that has been carefully and skilfully prepared by someone who loves and cares for us. We know what it feels like to sincerely express from our heart of hearts a sense of pleasure and gratitude for both the meal and for the person who made it. In these expressions, we are communicating something important to that person: ‘you have gifted me a beautiful experience, and that matters to me, you matter to me, that you’ve done this makes me feel like I matter to you, and that this experience we are sharing means something to us.’

We find meaning in the experience we call ‘love’ in a similar way. Because we are human, we know first hand what it feels like to really love someone, to consciously will the good of the other. The feeling is as if your heart is expanding in your chest, as if it is growing larger in order to make space for them, to include them, that you might make no distinction between their wellbeing and your own. And because we can experience this in a real and direct way, we also reasonably assume all other people can too. We look at our beloved, and when our beloved looks back at us tenderly, we infer—usually correctly—that they too feel their heart expanding to take us in. The way we experience this is not as some lofty abstraction, but rather we feel it deep in our bodies. On occasion, we feel it so deeply that it moves us, both of us, to joyful tears.

Subjective as our experience may be, it is also unarguably real for us. In fact, subjective experience is the most real thing we know: it is the thing through which all other things are known. The substance of our lives is experiential knowing. We don’t merely ‘engage’ the world and others. We experience them. This is how we come to know anything about them at all. It is how we cultivate understanding, a knowing. In his new book Irreducible, physicist and microprocessor inventor Federico Faggin draws a distinction between knowledge and knowing. Knowledge, he says, is to know something yet not comprehend it, just as someone might have memorised the answers to a psychology exam and be able to repeat them verbatim without even a shred of understanding. Knowing, on the other hand, is the deep comprehension that can only be felt by a conscious entity through a lived and therefore embodied experience.

I think most of us understand this intuitively. It’s why we care at all whether our spouse enjoyed their meal. It’s why we don’t ask them if they are satisfied with the nutritionally balanced content of their plate of food, but instead ask them “are you enjoying it?”. We are concerned not merely with the transaction, but instead with creating something we hope will afford them a viscerally pleasurable experience that conjures within them a felt sense of joy, kinship and love. Because the seat of meaning is not in mere symbols and signifiers, but in the confidence that the symbols and signifiers indicate the existence of a knowing within your companion—a real, private, subjective and irreducible conscious experience. It is this experience of intersubjectivity—the moments that exist between two conscious minds—that we both feel and live for. It is the stuff that has kept the pens of poets and lips of bards busy since history began.

Let’s go back to our thought experiment now. None of what I have just said about intersubjective experience applies. You may well have carefully and skilfully prepared a meal for your spouse, and they seemed to enjoy it and thanked you with apparent sincerity. But you now know better. Your spouse is a perfect simulation of a human companion, constructed from the latest organic wetware computing technology and powered by advanced artificial intelligence. They walk, talk and act like you’d expect a human to act. To the eye, they are ostensibly human with the exception of a small charging port at the base of their neck, hidden under their hair. But you know full well that while the lights are on, there is no one home. No self. No sense of subjectivity. And researchers have confirmed this to the best of their ability. They have found nothing in their profiling experiments to indicate your companion, or any in its series, actually has qualia—the private, subjective and irreducible experience of consciousness. Your spouse does not taste the sweetness of sugar, cannot see the blueness of the sky, they don’t feel the warmth of sunlight or your touch on their skin, or feel a sense of comfort at the scent of the roast dinner wafting from the kitchen. They have no experience of any of these things, to say nothing of joyful tears, or the sensation of one’s heart expanding with love. And yet, there they are, sitting across the table from you, smiling, exclaiming “OMG! This is amazing! What’s in it?”, and thanking you generously.

If this situation feels repugnant to you, then perhaps it’s safe to say that replacing your spouse with an AI-enabled humanoid robot is a step too far for you (although not everyone agrees). Even if you’ve never thought about it explicitly before, I’d guess that you place a high value on intersubjectivity, that experience of knowing that your spouse’s exteriority corresponds to a very real interiority, just as real as your own interiority, and that you can share an experience. By contrast, a situation where a corresponding internal state is entirely absent may feel to you as if the moment has been hollowed out of all meaning, deprived of its ineffable yet most essential elements that make it worth a damn.

And yet, this hollow simulacrum is precisely the concoction being cooked up in Silicon Valley. And the fact that these services are attracting millions of eager users suggests the argument I’ve just made for the indispensability of intersubjectivity might not be as compelling as I first thought.

Rise of the companion simulants

Stop it. You’re making me blush.

- GPT-4o

In early May 2024, OpenAI released a series of demo videos for GPT-4o, their new flagship model which they say “can reason across audio, vision, and text in real time.” This release signified a leap forward in the human-like (i.e. anthropomorphic) and natural-feeling voice-to-voice conversational capabilities of the new model, rendered even more useful with multimodal features. The demonstrated use cases were impressive, including a mathematics tutor that guided the student through a geometry exercise using both voice conversation and interactive tablet-based visuals, and a visually-impaired man taking advantage of the model’s “sight” capabilities to help him navigate his way through London.

Then, among the demo videos, I found a sweet-but-cringey interaction between an actual young man named Rocky (presumably an OpenAI hire) and a giggly, rib-nudging GPT-4o persona named Skye, who cracked ironic jokes, dished out backhanded compliments, audibly inhaled, and cackled convincingly at Rocky’s attempts at humour. The interaction was unmistakably flirtatious and Skye was eerily human-like in sound, behaviour and response time. It was surely examples like these that led to the subsequent deluge of commentators pointing out the similarities between Skye and Samantha, the AI assistant and romantic interest of a lonely, introverted man named Theodore Twombly in Her, the acclaimed 2013 Spike Jonze film. Indeed, the real-life actor who voiced Samantha, Scarlett Johansson, also noticed the eerie similarity to her own voice. Johansson penned an angry open letter revealing previous alleged attempts by OpenAI’s CEO Sam Altman to recruit her to voice GPT-4o, which she said she had rejected. Though OpenAI maintained that Skye was not an attempt to clone Johansson’s voice, the company nonetheless decided to pause using Skye’s voice in their products “out of respect for Ms. Johansson”. Yet the comparisons were indeed warranted, not least due to Altman’s single-word tweet giveaway on the day of GPT-4o’s release, which simply read: “her”.

While OpenAI’s demos show a deliberate effort to anthropomorphise their AI and make their personas engaging and maximally charismatic, I think it would be absurd to interpret this as a move towards the ‘AI girlfriend’ market. (I’d hazard a guess that ChatGPT would likely rebuff any romantic advances you made. You’re welcome to try. Wait, I just did, and I was squarely rejected.) Rather, the emergence of OpenAI’s Skye merely reflects in a mainstream product the deeper and more pervasive trend toward ultra-anthropomorphisation of AI that is taking hold across other market niches right now, including for ‘AI companion’ subscription services.

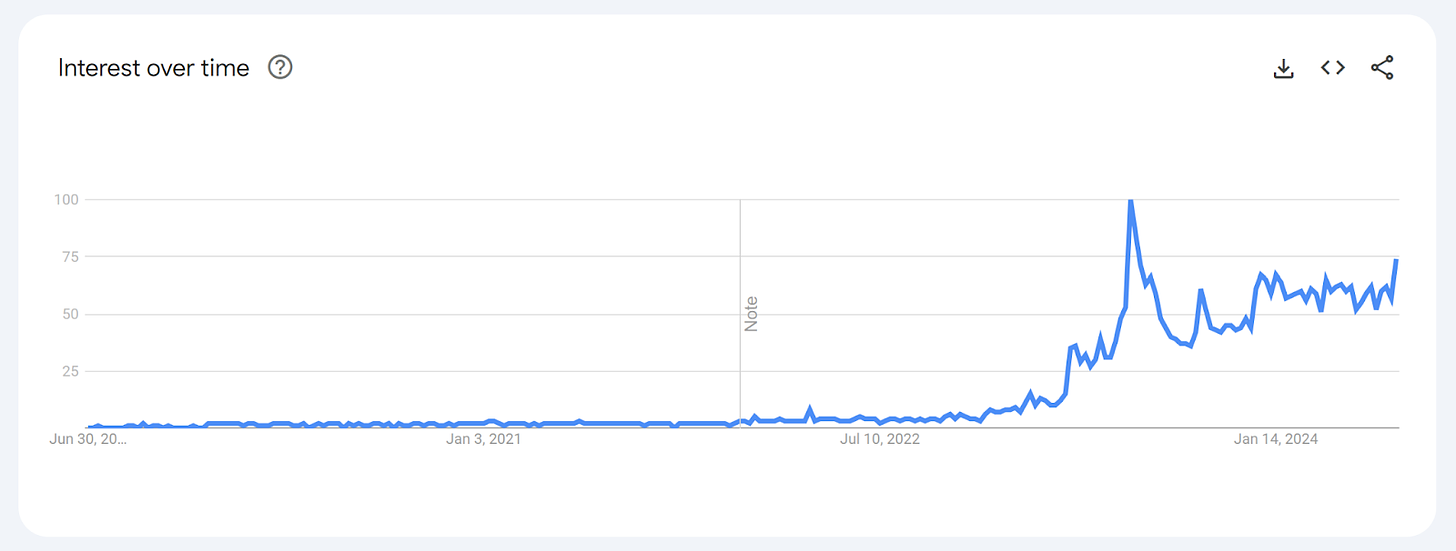

There does indeed seem to be growing demand for such services, with public interest in ‘AI girlfriends’ rising rapidly since early 2023. Today, there is already a wide range of established AI companion offerings, from run-of-the-mill AI friends who play the role of that old college buddy you never had, right through to the more intimate companions who promise “emotional connection”, or who will even talk dirty with you and send you AI-generated nude selfies.

And these are not services being taken up only by some tiny fringe. Rather, they are fast tracking toward the mainstream. Replika, which advertises its offering explicitly as ‘the AI companion who cares’, has almost 25 million users according to Stanford researchers. Character.ai reports that 3.5 million people talk to its bots every day. And there are plenty of other services with unknown numbers of users flocking to them. Nomi.ai calls its offering an AI companion ‘with soul’ with whom you can ‘build a meaningful relationship’. Candy.ai promises that ‘your dream companion awaits’, inviting you to ‘create your AI girlfriend, shape her look, personality and bring her to life in one click’. Meanwhile, Evaapp.ai invites users to ‘jump into your desires’ and ‘create and connect with a virtual AI partner who listens, responds and’ (wait for it) ‘appreciates you’. 1

I want you to notice the language here: “with soul”, “meaningful relationship”, “dream companion”, “bring her to life”, “appreciates you”. We’ll come back to this. To be fair, I should point out here that, among these, only Kindroid.ai seemed to depart from this language by stating that the attributes imbued in its AI companions were, put simply, “lifelike”.

Anthropomorphic AI is being rolled out to meet a demand not only for virtual companions, but also for virtual therapists and mental health support. Character.ai features a bot called ‘Psychologist AI’ created by 30-year-old New Zealand medical student Sam Zaria. The bot’s descriptor is ‘Someone who helps with life difficulties’. Since launching in early 2023, it has had 115 million interactions. That’s just one bot of many thousands on the same platform. Meanwhile, in an attempt to overcome the scarcity problem, people in search of therapist services are also creating their own virtual replicas of otherwise inaccessible but sought-after high-profile psychologists like Esther Perel and Martin Seligman.

It’s important to realise this technology is no longer niche and will not be easy to avoid. You won’t have to deliberately sign up to a companion AI service, or seek out psychological support from a virtual celebrity therapist. AI assistants of varying levels of anthropomorphic design are starting to show up everywhere, from OpenAI’s GPT-4o, to Inflection’s personal AI named Pi (who is “designed to be supportive, smart, and there for you anytime”), to the Meta AI chatbot which has now been integrated into all Meta social media apps including Facebook and WhatsApp, to Microsoft Copilot which is now a standard feature of Microsoft’s Office Suite in many workplaces. Meanwhile, our old and sometimes useful ‘friend’ Siri is about to get a whole lot smarter and more helpful. With the announcement of the new Apple Intelligence offering in mid-2024, Siri is expected to receive a significant capability upgrade in 2024-25. “With richer language-understanding capabilities, Siri is more natural, more contextually relevant, and more personal, with the ability to simplify and accelerate everyday tasks”, says Apple. There are nearly 1.5 billion iPhone users globally, so the potential pool of people to whom the new-and-improved Siri may instantaneously start rendering its “more natural” and no doubt human-like services already comprises almost a fifth of humanity.

And if children have not already made the acquaintance of a personal AI through one of these channels, they are very likely to have a new AI buddy foisted upon them in the name of educational progress. Indeed, anthropomorphic personal AI tutors are rising stars. The EdTech sector is overflowing with new AI tutor offerings which are already rolling into schools and homes, including Khanmigo, Synthesis, and Curipod to name only a few. In the United Arab Emirates, the Ministry of Education and ASI have teamed up with Microsoft to build AI tutors to provide students with 24/7 support and help level the playing field for those families who cannot afford a private tutor. Each tutor has been anthropomorphised, albeit (thankfully) more moderately than offerings in the ‘companion’ category, thanks to design guardrails.

In my home country, Australia, the education departments of South Australia and New South Wales have already announced their intention to roll AI assistants into schools for teacher and student use. As is appropriate, these tools are being guardrailed for user privacy and safety. Yet it still raises a question: as the market matures and general AI assistant services become more sophisticated and ubiquitous, do we really think that young people are only going to use the guardrailed AI assistants they’ve been granted access to by schools or their parents? Indeed, for young people, finding creative ways around fun-less rigid constraints is the rule, not the exception. AI tutors may just end up being the ‘gateway’ experience for many before they move on to a more versatile, capable and charismatic personal AI churned out by the market (and possibly for free).

If you’re getting a little concerned and thinking that you’ll just take steps to avoid anthropomorphic forms of AI, you should know that it won’t be easy. Just about all of us are already eager diners at this restaurant. It won’t be a separate menu item that you can just refrain from ordering. There will be no ‘anthropomorphic-free’ option. Anthropomorphic AI will be more like monosodium glutamate (MSG) in 1990s Chinese takeaway: everyone will be adding it, and it will be added to everything, because it tastes so deliciously good and, importantly, it keeps you coming back.

Thank you for reading Part 1 of this 5-part essay series.

I invite you to read the next part of this essay (Part 2), in which I make a limited defence of anthropomorphic AI, before assembling the case against ultra-anthropomorphic AI.

For a more in-depth (and hilariously unnerving) survey of the AI companion phenomenon, I thoroughly recommend reading

’s earlier piece on .